1.2 Analog, Digital and Discrete-Time Signals

In general, a signal is anything that can be transmitted or stored, and represents useful information.

A few examples illustrate what information means in this context. Monochromatic and color images are two-dimensional (2D) and 3D signals, respectively, in which the information is the set of pixel values. For instance, a color image with a resolution of $M \times N$ pixels can be represented in the RGB color space¹ by a 3D matrix with dimension $M \times N \times 3$.

A video is a sequence of images (each one called a frame) that are indexed by the integer $n$. While an image does not depend on time, the value of $n$ in the video is interpreted as the time instant. For example, a video with 500 frames can be represented by a 4D multidimensional array (also called a tensor) of dimension $M \times N \times 3 \times 500$, in which each frame is an image with dimension $M \times N \times 3$ corresponding to time $n$.
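The array representation of a video can be sketched in Matlab/Octave as follows. The sizes and variable names are hypothetical and were chosen only for illustration. Note that Matlab/Octave indices start at 1, so the first frame is stored at index 1.

```matlab
% Hypothetical sizes, chosen only for illustration
M = 4; N = 6;                      % image height and width (pixels)
numFrames = 500;                   % number of frames in the video
video = zeros(M, N, 3, numFrames); % 4D array: rows x columns x RGB x time
n = 10;                            % index of the frame of interest
frame = video(:, :, :, n);         % 3D array with the n-th frame
redChannel = frame(:, :, 1);       % 2D array with the red component
disp(size(video)); disp(size(frame)); disp(size(redChannel));
```

Fixing the last index selects one frame, and fixing the third index of that frame selects a single color component, which is a 2D signal.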

Another example of a signal is the electrocardiogram (ECG). Typically, the ECG is recorded with several channels and constitutes a multivariate or multidimensional signal $\mathbf{x}(t)$, where $t$ is the time dimension. The information in the ECG corresponds to the amplitudes of each channel, which for a given $t$ can be organized as a vector $\mathbf{x}(t)$. For instance, assuming six channels and a given time $t_0$, the vector $\mathbf{x}(t_0)$ contains the six amplitude values.

The examples above show that signals can have very different characteristics. To simplify the initial discussion, unless otherwise stated, we hereafter assume a one-dimensional real-valued signal that describes how a single amplitude varies over time.

It is useful to classify signals according to the behavior of these two variables: the independent variable, which represents progress in time, and the amplitude, which is the dependent variable. If time evolution is represented by a real-valued variable $t$, the function $x(t)$ is called a continuous-time signal. If the progress over time is represented by an integer index $n$, the sequence $x[n]$ is called a discrete-time signal.

Similarly, the amplitude can freely assume any real value or be restricted to pre-specified values from a finite set $\mathcal{M}$. In the latter case, the amplitude is said to be quantized. The quantized amplitudes are not necessarily integers. The number of elements in $\mathcal{M}$, i.e., the cardinality $M = |\mathcal{M}|$, indicates whether the system is binary ($M=2$), quaternary ($M=4$) or, in general, $M$-ary. A subscript $q$ will be used to denote a quantized signal, such as in $x_q[n]$.

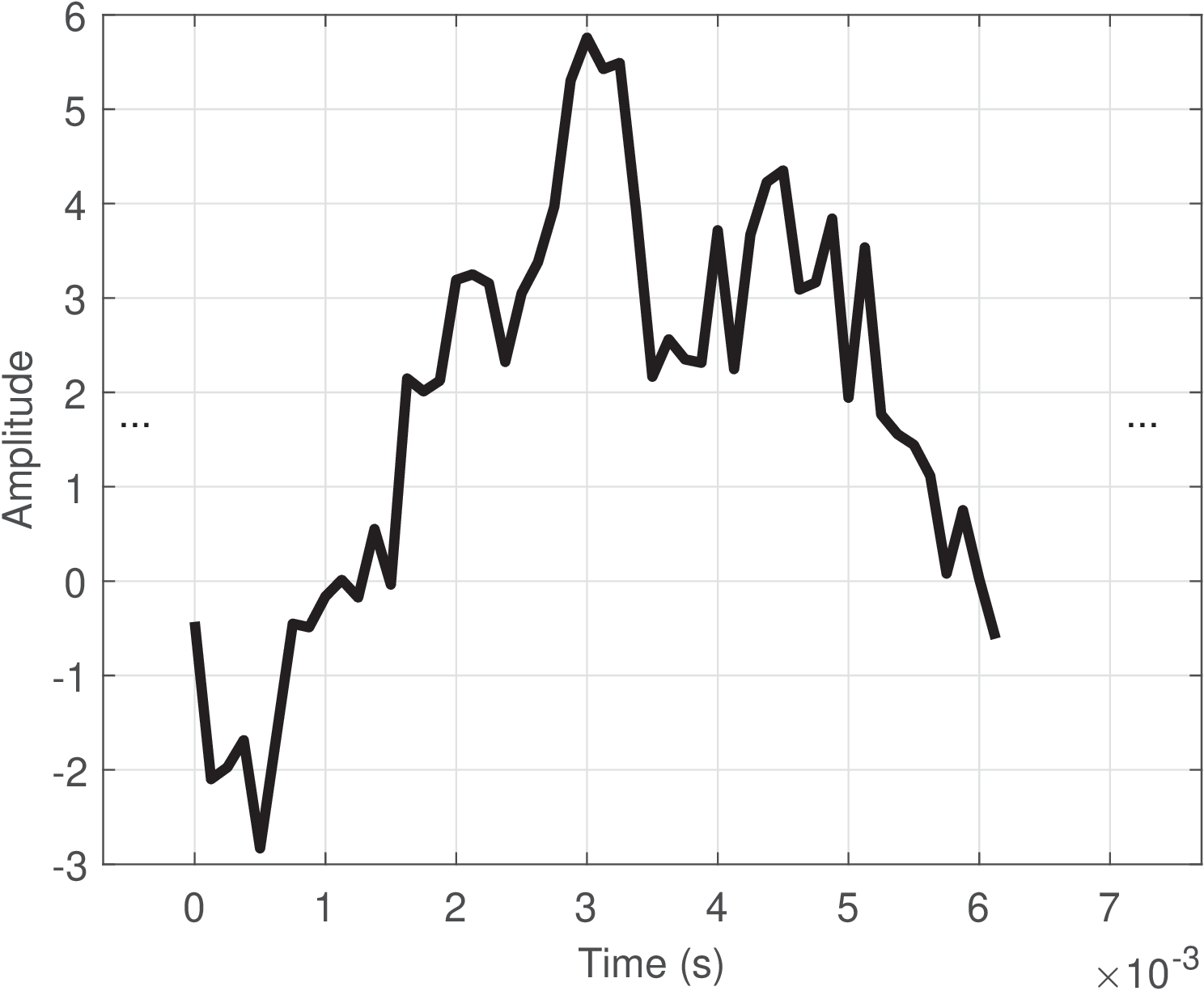

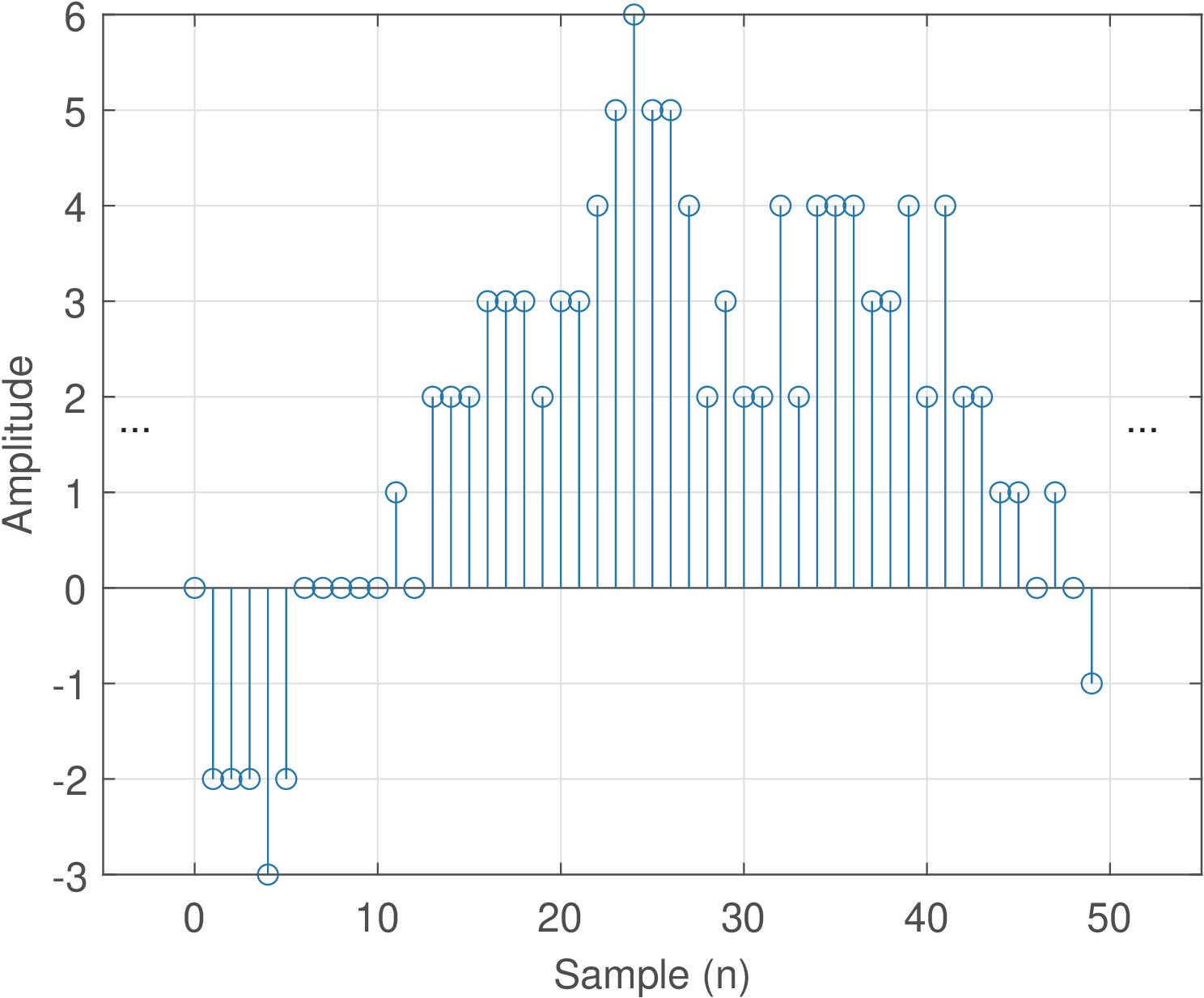

Based on the previous definitions, it is possible to define analog and digital signals, which are the most common signals in practice. A digital signal is a discrete-time signal with quantized amplitudes. An analog signal is a continuous-time signal in which the amplitudes are not quantized (are not restricted to a finite number of distinct values). Table 1.1 summarizes a useful taxonomy of signals. Figure 1.1 and Figure 1.2 provide examples of analog and digital signals, respectively.

| | Continuous-time, $t$ | Discrete-time, $n$ |
|---|---|---|
| Quantized amplitude | $x_q(t)$ | $x_q[n]$ (digital) |
| Not quantized | $x(t)$ (analog) | $x[n]$ |

Signals that exist in the real world are inherently analog. Even a DC power supply regulated to output 0 or 5 volts will present a small random amplitude fluctuation due to circuit imperfections and noise. Its output could then be (strictly) classified as an analog signal $x(t)$. But the circuits of a traditional digital system (e.g., a computer) can tolerate amplitude variations within a given range and, consequently, one may want to model such a signal as continuous in time and with quantized amplitudes, denoting it as $x_q(t)$. Some authors call such a continuous-time signal with quantized amplitudes a “digital” signal, but this nomenclature will be avoided in this text.

The interfaces between digital systems and the analog world require analog to digital (A/D) and digital to analog (D/A) conversions, which will be discussed in Section 1.7.

1.2.1 Advanced: Ambiguous notation: whole signal or single sample

It should be noted that the notation $x[n]$ (the same happens for $x(t)$) is ambiguous in the sense that it is widely used to represent both a) the complete sequence and b) a single sample at time $n$. In most scenarios both interpretations are valid because, if someone provides an equation such as

|

| (1.1) |

which is valid for all $n$, this equation can be repeatedly used to reconstruct the whole sequence by varying $n$, or be interpreted as a single sample for a specific value of $n$. In some cases, to disambiguate the two interpretations, a notation such as $x[n_0]$ or $x(t_0)$ is adopted, where $n_0$ and $t_0$ denote specific time instants instead of a generic variable that can be iteratively used to represent the complete sequence.²

1.2.2 Digitizing signals

In many digital signal processing applications, a real-world analog signal must be converted into a digital format and then processed with a computer, microcontroller, FPGA (field-programmable gate array) or digital signal processor (DSP) chip, for example. Therefore, the A/D process is briefly reviewed next.

The A/D converter (or ADC chip) transforms the input analog signal $x(t)$ into a digital signal $x_q[n]$, consisting of a sequence of quantized samples. The ADC executes two tasks:

- sampling: periodically extract samples to accurately describe the signal;

- quantization: represent each of these samples with a reasonable accuracy.

Sampling depends on the adopted sampling frequency $F_s$, which is the number of samples extracted from the signal per time unit (more specifically, per second).³ For example, $F_s = 8000$ Hz corresponds to obtaining 8000 samples to represent each signal segment with a duration of 1 second. The higher $F_s$, the more accurate the representation tends to be.

Quantization depends on the number $b$ of bits used to represent each sample. For example, if $b=2$, each sample can be represented by only $2^b = 4$ distinct values: 00, 01, 10 and 11. The mapping between these binary values and amplitudes is somewhat arbitrary: each codeword, such as 00 or 01, can be assigned to any voltage level the designer chooses. In general, an ADC of $b$ bits can output $2^b$ distinct quantized values.
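A minimal sketch of a quantizer with $b=2$ bits is given below. The output levels of -3, -1, 1 and 3 V and their association with the codewords 00, 01, 10 and 11 are assumptions made only for this illustration, and each input is simply mapped to the nearest level.

```matlab
b = 2;                        % number of bits per sample
M = 2^b;                      % number of distinct output values
levels = [-3 -1 1 3];         % hypothetical output amplitudes (V), one per codeword
x = [-2.7 -0.2 0.4 2.1 5.0];  % example unquantized amplitudes (V)
xq = zeros(size(x));          % quantized amplitudes
codes = zeros(size(x));       % integer codewords from 0 to M-1
for i = 1:numel(x)
    [~, k] = min(abs(x(i) - levels)); % index of the nearest level
    xq(i) = levels(k);                % quantized amplitude
    codes(i) = k - 1;                 % codeword as an integer
end
disp(xq);                     % -3 -1 1 3 3
disp(dec2bin(codes, b));      % codewords 00, 01, 10, 11 and 11
```

Note that the input of 5 V lies outside the assumed range and is mapped to the largest available level, which illustrates that a quantizer with a finite number of levels saturates.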

ADCs with large values for $F_s$ and $b$ are more expensive. Sometimes the tradeoff is to use a relatively large $F_s$ with a small $b$ (e.g., a sampling frequency in the GHz range with few bits) or vice versa (e.g., a sampling frequency in the MHz range with many bits).

The chip that performs the digital-to-analog conversion is called a DAC. It also operates according to the values of $F_s$ and $b$.

Note that the operation performed by an ADC chip is in general lossy and, consequently, non-invertible.⁴ Therefore, cascading an ADC and a DAC recovers only an approximation of the original signal $x(t)$. In this text we will learn how to properly choose $F_s$ and $b$ to control the A/D and D/A processes, in order to recover $x(t)$ with the accuracy that the given application demands. These two parameters are the most relevant in the A/D and D/A processes, but when choosing commercial chips there are many others (see the exercises in Section 1.16). One important figure of merit to estimate this accuracy is the signal-to-noise ratio (SNR), which is the ratio between the power of the input signal and the power of the “noise” signal, which in this case is the total error caused by the sampling and quantization stages. When this error is caused solely by quantization, the SNR is called the signal-to-quantization-noise ratio (SQNR).
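The SQNR definition can be illustrated with a rough sketch that quantizes a sampled cosine and compares the signal power with the error power. The uniform quantizer below, its range of $[-A, A]$, the choice of $b = 8$ bits and the cosine frequency of 403 Hz are all assumptions adopted only for this example, and do not describe a specific ADC chip.

```matlab
Fs = 8000;                  % sampling frequency (Hz)
f0 = 403;                   % hypothetical cosine frequency (Hz)
A = 6;                      % cosine amplitude (V)
b = 8;                      % number of bits of the assumed quantizer
n = 0:Fs-1;                 % one second of samples
x = A*cos(2*pi*f0*n/Fs);    % unquantized discrete-time signal
delta = 2*A/2^b;            % quantization step for the range [-A, A]
idx = floor(x/delta);       % integer index of the quantization interval
idx = min(max(idx, -2^(b-1)), 2^(b-1)-1); % keep only the 2^b valid indices
xq = (idx + 0.5)*delta;     % output level at the middle of each interval
e = x - xq;                 % quantization error ("noise")
Px = mean(x.^2);            % signal power
Pe = mean(e.^2);            % error power
SQNRdB = 10*log10(Px/Pe)    % signal-to-quantization-noise ratio (dB)
```

Rerunning the sketch with other values of `b` gives a feeling for how the number of bits affects the accuracy of the quantized representation.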

1.2.3 Discrete-time signals

In practice, the A/D conversion is typically performed by a monolithic ADC chip. But for theoretical studies, it is convenient to mathematically model this A/D conversion by splitting it into the two mentioned stages: sampling and quantization. One important reason for adopting these two stages when modeling the A/D conversion is that, while sampling is a linear operation, the input-output relation of quantizers is nonlinear (you may want to take a peek at Figure 1.53 and note that the relation corresponds to a nonlinear staircase function). There are several tools, such as the Laplace and Fourier transforms discussed in Chapter 2, for dealing with linear operations. On the other hand, working with nonlinear systems is more involved. Because of that, most “digital” signal processing theory does not assume that the amplitude is quantized, which enables the use of tools restricted to linear operations. Hence, strictly speaking, most of the DSP theory could be called “discrete-time” signal processing. However, the name “digital signal processing” is more popular.

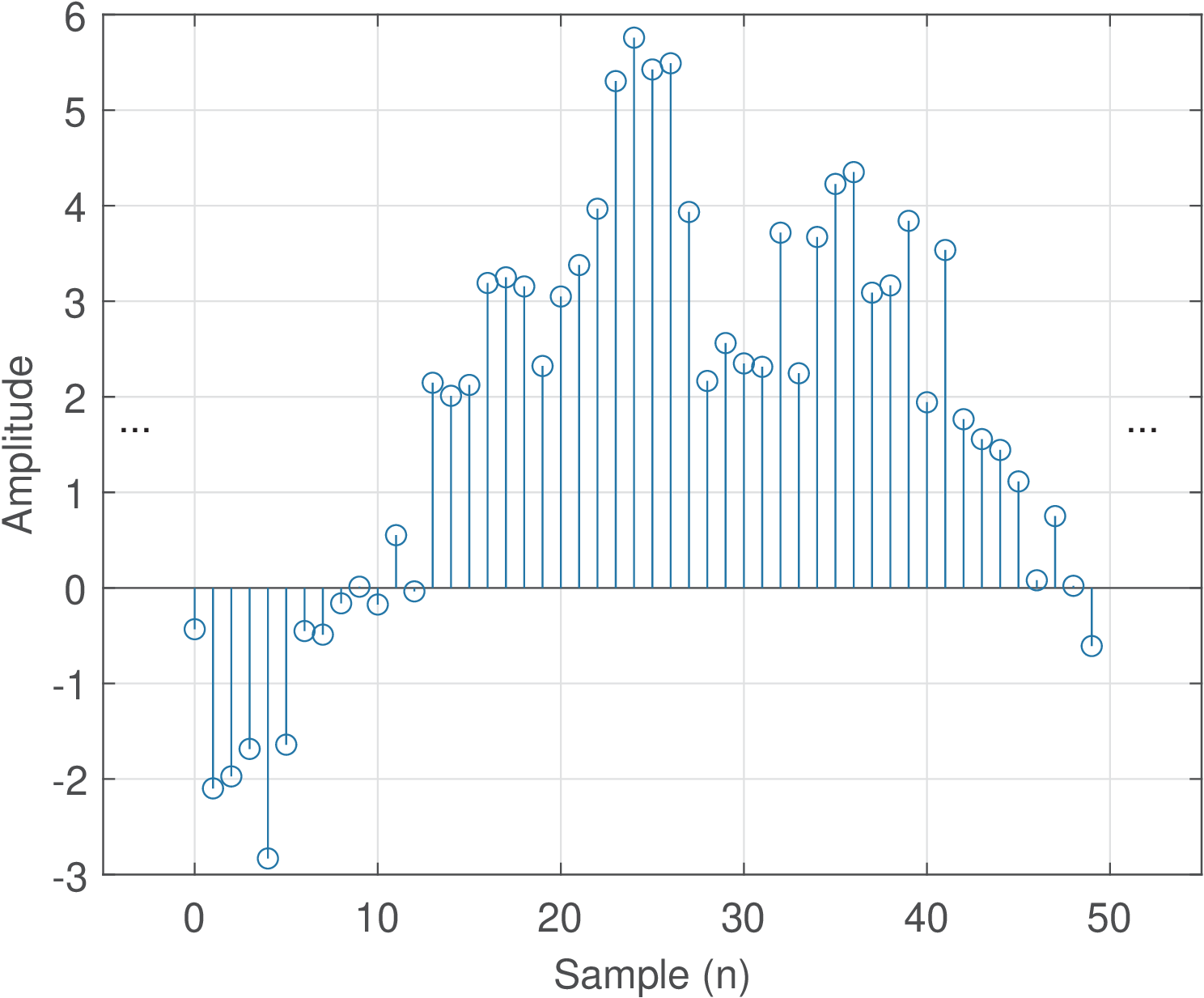

Figure 1.3 illustrates a discrete-time signal. Note that the abscissa is given in discrete time $n$, similar to the notation adopted for digital signals. But for a discrete-time signal $x[n]$, the amplitude is not quantized and can assume any value. Figure 1.3 should be compared with Figure 1.1 and Figure 1.2, observing each axis.

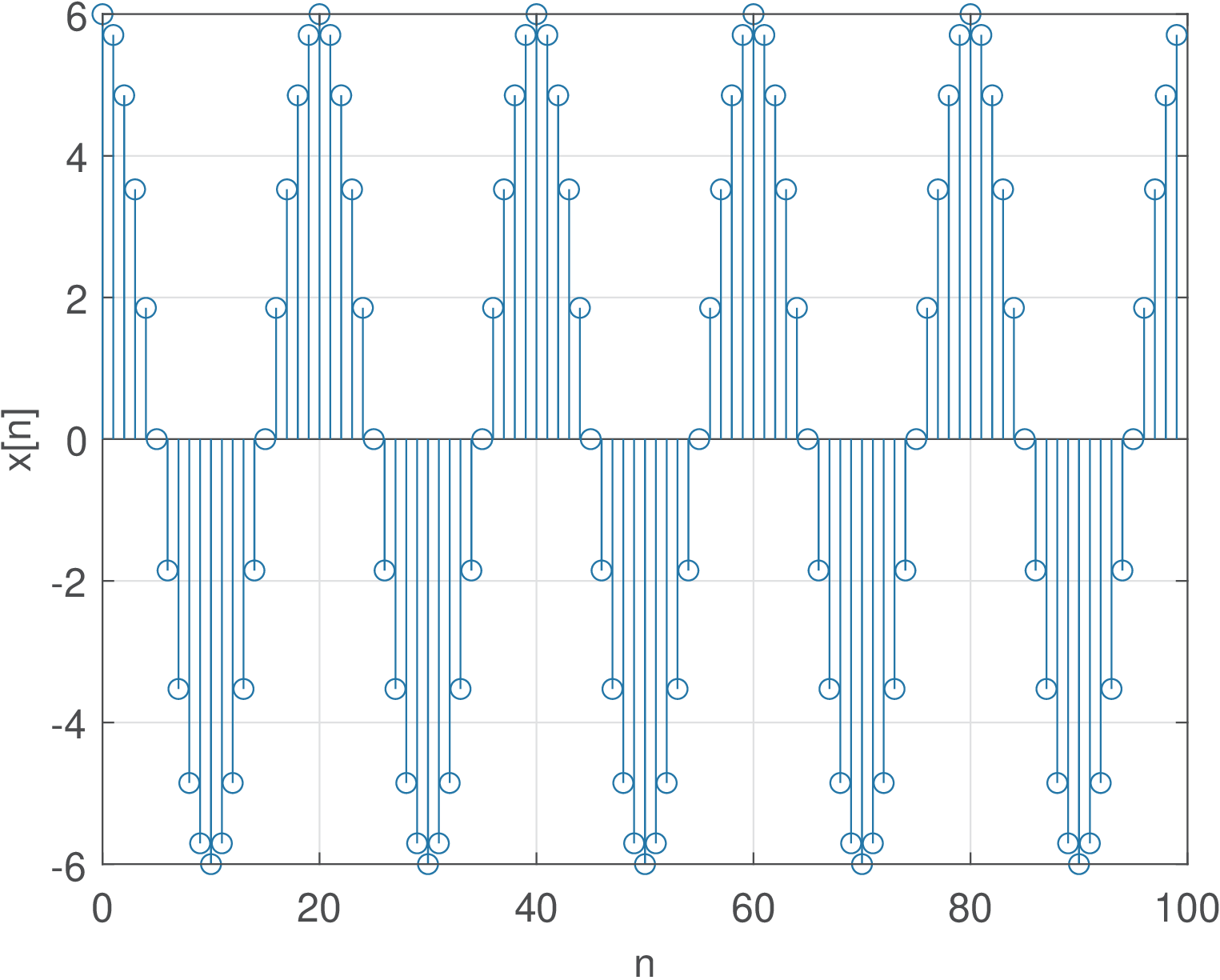

Example 1.1. Example of creating a discrete-time signal from a continuous-time sinusoid. Consider extracting samples from the analog signal $x(t) = 6\cos(2\pi f_0 t)$ with amplitude 6 volts and frequency $f_0 = 400$ Hz (the subscript is just to indicate that this is a specific and fixed frequency value). The goal is to use $F_s = 8000$ samples to represent each segment of one second, where $F_s$ is the sampling frequency. The time interval between consecutive samples is $T_s = 1/F_s$, which in this case is $T_s = 1/8000 = 125 \times 10^{-6}$ s. Listing 1.1 illustrates one possible implementation.

```matlab
Fs=8000; %sampling frequency (Hz)
Ts=1/Fs; %sampling interval (seconds)
f0=400; %cosine frequency (Hz)
N=100; %number of desired samples
n=0:N-1; %generate discrete-time abscissa
t=n*Ts; %discretized continuous-time axis (sec.)
x=6*cos(2*pi*f0*t); %amplitude=6 V and frequency = f0 Hz
stem(n,x); %plot discrete-time signal
```

Figure 1.4 shows the result of executing Listing 1.1. This cosine has a period of $T_0 = 1/f_0 = 2.5$ ms. Therefore, each of its periods is represented by $T_0/T_s = 20$ samples. Note in Figure 1.4 that, in this specific case, at each sample $n = 20k$, with $k$ an integer, the sample at $n = 0$ is repeated, and a new cosine cycle starts.
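The periodicity of 20 samples can be checked numerically with the short sketch below, which reuses the values of Listing 1.1. The variable names `samplesPerPeriod` and `maxDiff` are introduced here only for illustration.

```matlab
Fs = 8000;                 % sampling frequency (Hz)
Ts = 1/Fs;                 % sampling interval (seconds)
f0 = 400;                  % cosine frequency (Hz)
samplesPerPeriod = Fs/f0;  % number of samples per cosine period (20)
n = 0:99;                  % discrete-time abscissa
x = 6*cos(2*pi*f0*n*Ts);   % sampled cosine
% compare x[n] with x[n+20]: the difference should be negligible
maxDiff = max(abs(x(1:end-20) - x(21:end)));
disp(samplesPerPeriod); disp(maxDiff);
```

The value of `maxDiff` is not exactly zero only because of floating-point rounding errors.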

1.2.4 Brief introduction to frequency-domain analysis

In subsequent chapters, the task of representing signals in the frequency domain will be thoroughly discussed. But it is convenient to give here a very brief introduction to a key aspect of Fourier analysis: the capability of decomposing a signal into its frequency components. For instance, by inspection, a signal

$$x(t) = 500\cos(2000\pi t + 0.3) + 2\cos(7000\pi t + \pi) \tag{1.2}$$

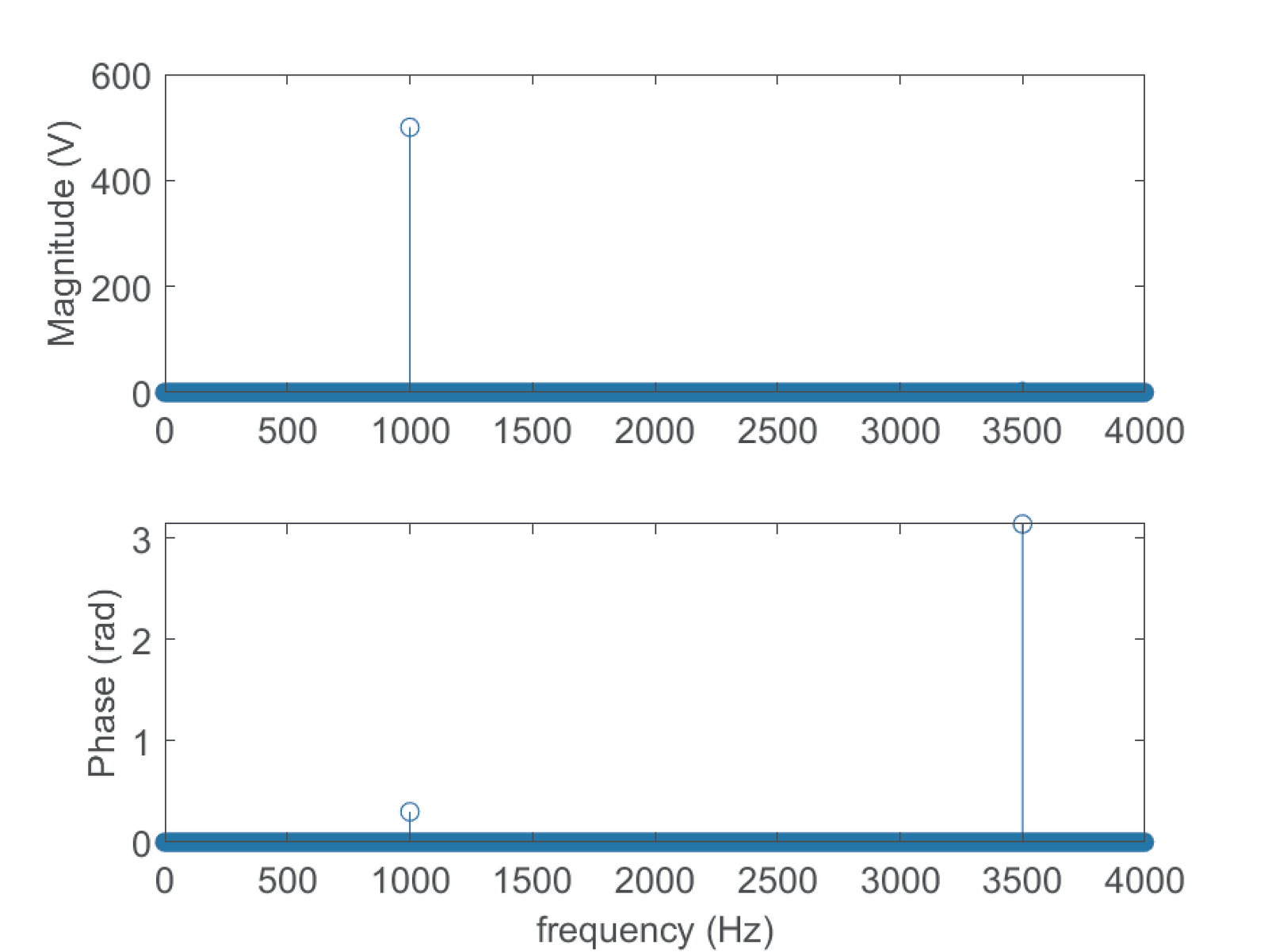

is composed of two frequency components. The first one is a cosine with an amplitude of 500 V, phase of 0.3 radians and angular frequency of $2000\pi$ rad/s, which corresponds to a frequency of 1000 Hz. The second frequency component has an amplitude of 2 V, phase of $\pi$ radians and angular frequency of $7000\pi$ rad/s (corresponding to 3500 Hz). Fourier analysis provides exactly this kind of information about the frequency components that, when added together, create the corresponding time-domain signal.
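As a rough illustration of this decomposition, the sketch below samples a signal with the two components used in Listing 1.2 (500 V at 1000 Hz with phase 0.3 rad, and 2 V at 3500 Hz with phase $\pi$ rad) and recovers their amplitudes and phases with the built-in `fft` function. The sampling frequency of 8000 Hz and the duration of 1 s are assumptions chosen so that both frequencies coincide exactly with FFT bins. This is not the method implemented by the functions used to generate the figures.

```matlab
Fs = 8000;                 % assumed sampling frequency (Hz)
N = Fs;                    % 1 s of signal, so that each FFT bin spans 1 Hz
t = (0:N-1)/Fs;            % time axis (s)
x = 500*cos(2*pi*1000*t + 0.3) + 2*cos(2*pi*3500*t + pi);
X = fft(x)/N;              % normalized FFT
k = [1000 3500] + 1;       % Matlab indices of the bins at 1000 and 3500 Hz
amplitudes = 2*abs(X(k))   % approximately [500 2]
phases = angle(X(k))       % approximately 0.3 and pi (or -pi) radians
```

The factor of 2 accounts for the fact that the FFT of a real-valued cosine splits its amplitude between a positive and a negative frequency.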

There are many techniques based on Fourier analysis that represent information about the frequency components of a signal. A few examples are provided below:

- Spectrum: informs the frequency, magnitude and phase of each frequency component of the corresponding signal.

- PSD: power spectral density (PSD) provides information on how the signal power is distributed over frequency.

- Spectrogram: describes how power is distributed over both time and frequency, using a representation that corresponds to calculating several PSDs over different time segments.

The frequency components of the signal in Eq. (1.2) are depicted in Figure 1.5 using two distinct graphs: one for amplitude (top) and another for phase. The plots were obtained using a duration of 14 s for $x(t)$, but information about the signal duration is not available from spectrum plots.

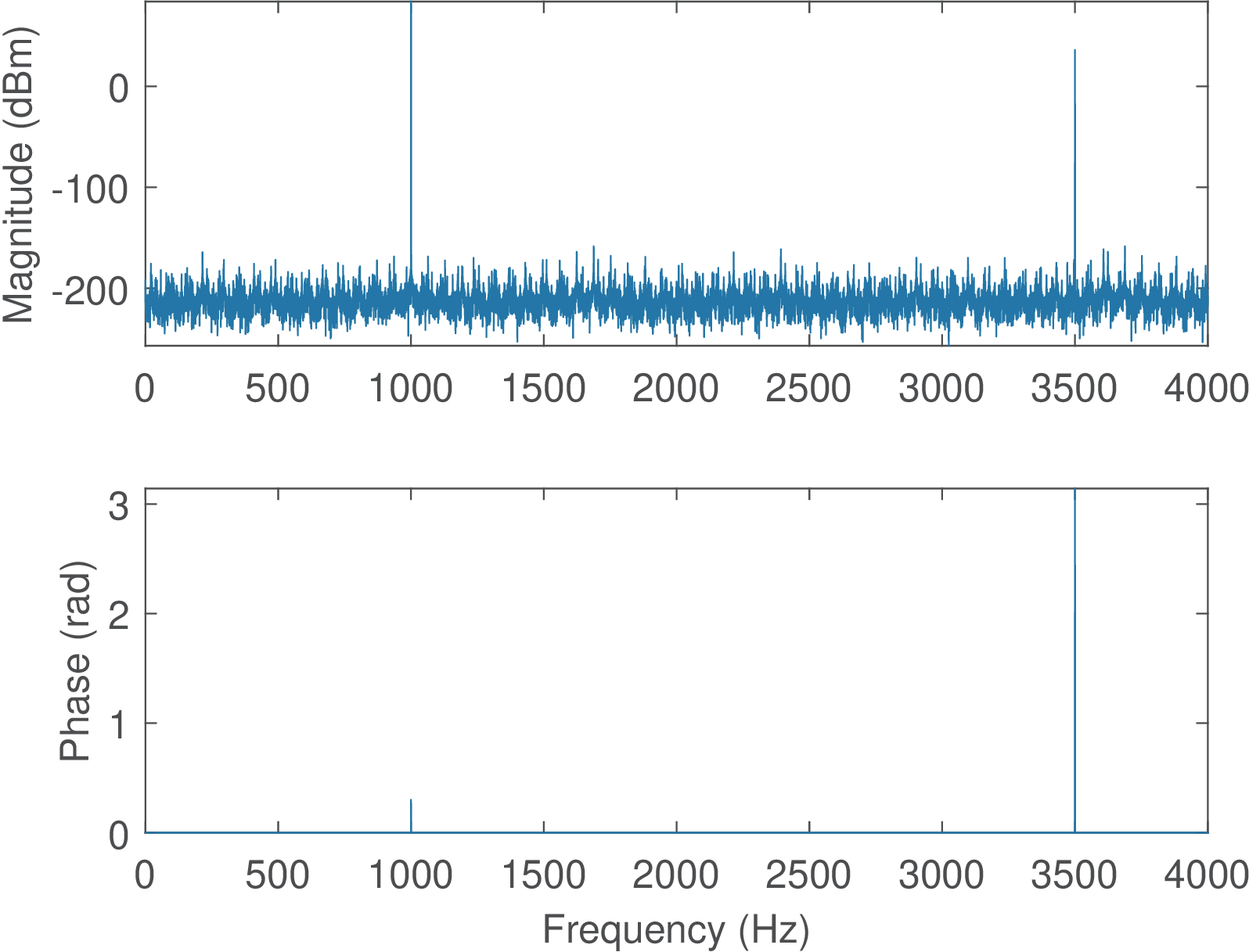

When compared to the 500 V of the first component of $x(t)$ in Eq. (1.2), the magnitude of 2 V of the component at 3500 Hz is relatively small and does not show up when the magnitude is depicted in linear scale in Figure 1.5. Alternatively, Figure 1.6 represents the magnitude in dBm and makes the two frequency components evident. Another distinction is that Figure 1.6 uses Matlab’s plot function, while Figure 1.5 uses Matlab’s stem function.
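The effect of the logarithmic scale can be checked with the sketch below, which applies to the two amplitudes the same conversion adopted in Listing 1.2 (20 times the base-10 logarithm of the magnitude, plus 30 to convert dBW into dBm). Treating the squared amplitude directly as a power in watts is an assumption of this sketch, and the exact normalization used to generate Figure 1.6 may differ.

```matlab
A = [500 2];              % amplitudes of the two components (V)
dBW = 20*log10(A);        % squared amplitudes in dB, interpreted as dBW
dBm = dBW + 30;           % 1 W = 1000 mW corresponds to adding 30 dB
ratio = A(1)/A(2);        % 250 in linear scale
difference = dBm(1) - dBm(2); % approximately 48 dB
disp(dBm); disp(ratio); disp(difference);
```

A ratio of 250 in linear scale becomes a difference of approximately 48 dB, which allows both components to be visible in the same graph.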

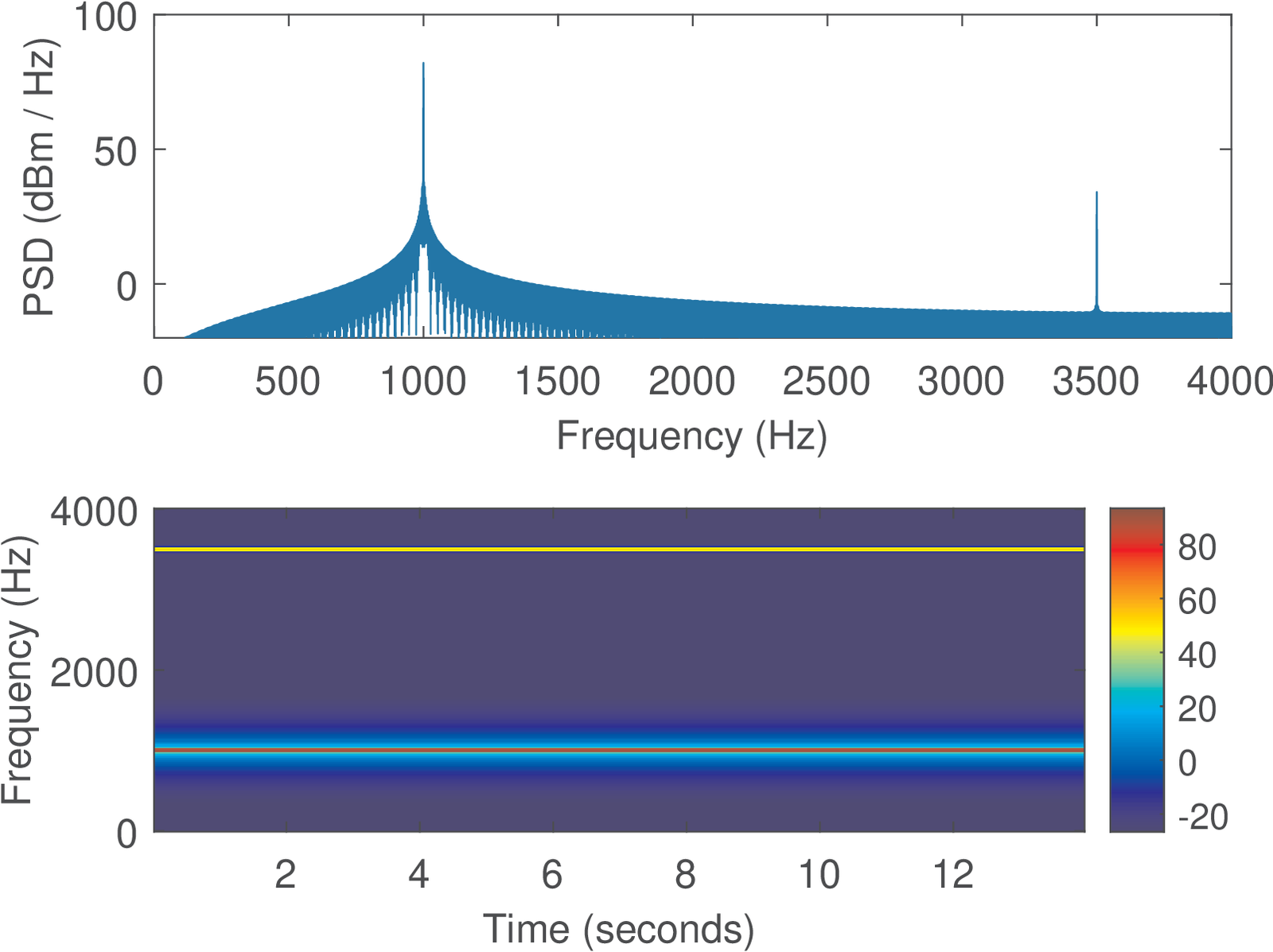

When it is not required to obtain information about the phase of the frequency components, it may be convenient to represent the frequency components using the PSD or the spectrogram. Figure 1.7 provides an example using the same signal of Eq. (1.2). In this case, the duration of 14 s can be observed from the spectrogram.

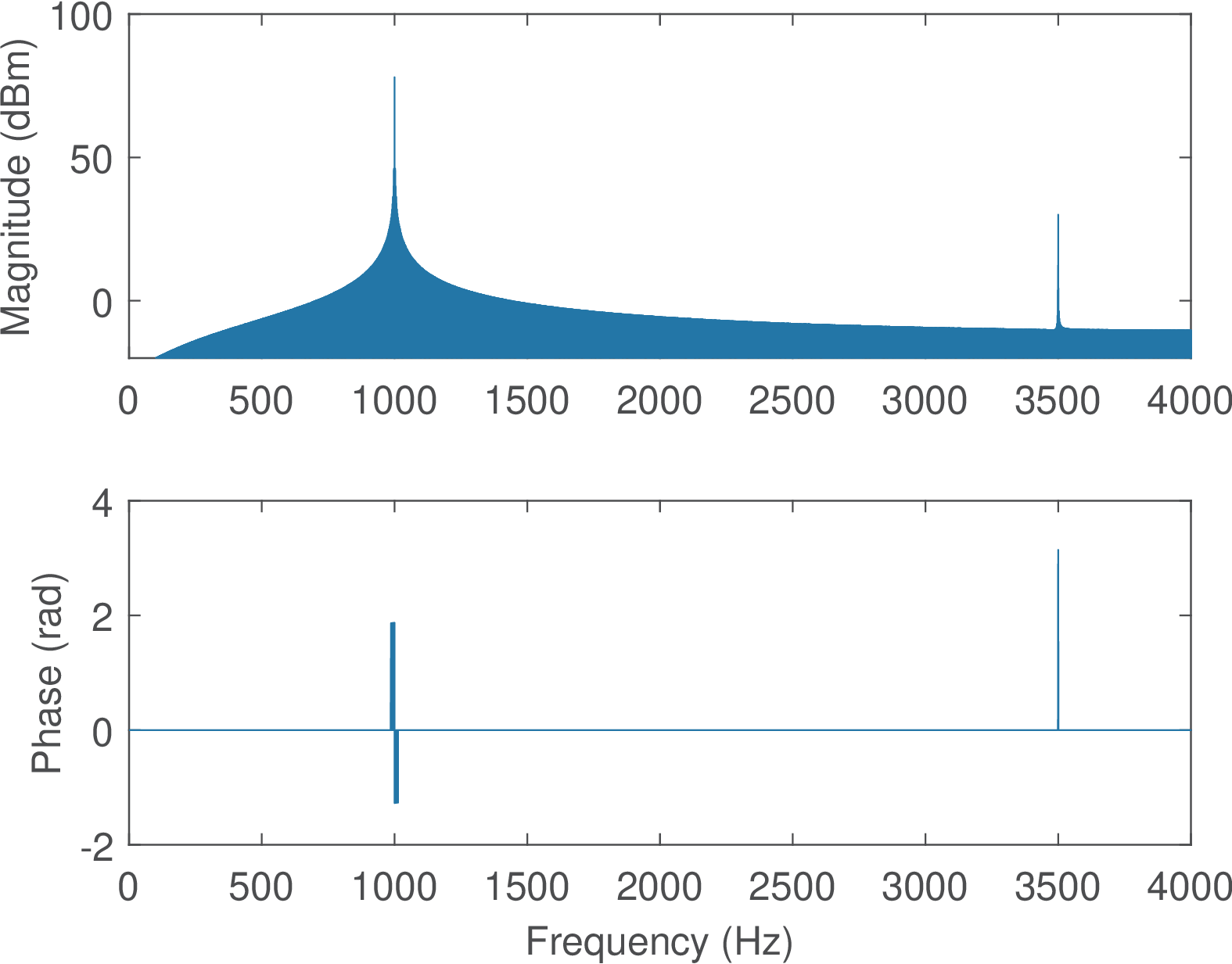

It can be seen from Figure 1.7 that the spectrogram is a redundant representation when the magnitudes of the frequency components do not change over time. To compare with Figure 1.7, it is instructive to consider the spectrogram of a new signal $z(t)$, which has the same duration of 14 s, and is composed of the frequency component $500\cos(2000\pi t + 0.3)$ during the first half of the duration (7 s) and $2\cos(7000\pi t + \pi)$ during the second half. First, Figure 1.8 shows the spectrum of the new signal $z(t)$.

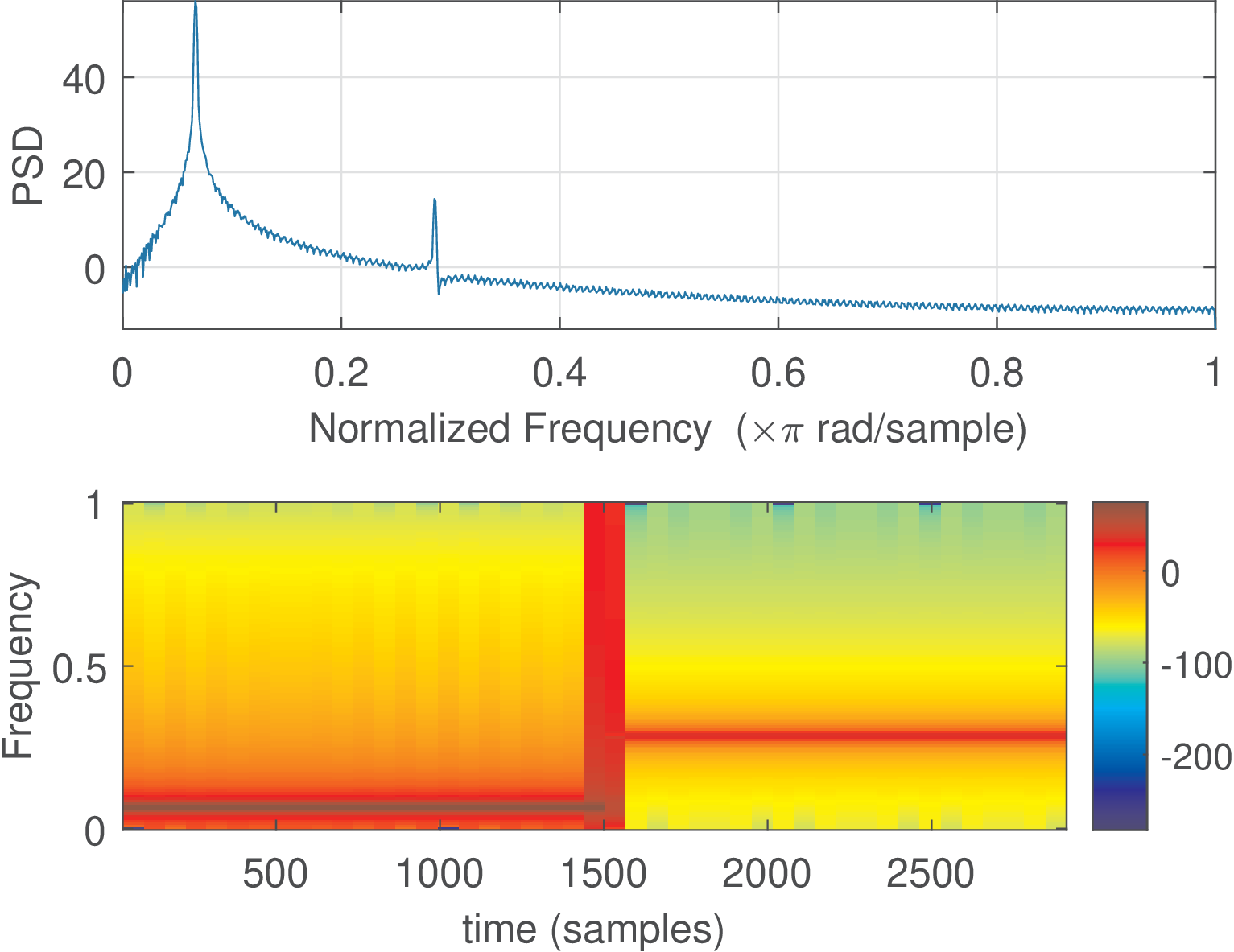

Comparing Figure 1.8 and Figure 1.6, one can notice that the two spectra are similar. The concatenation process affected primarily the phase of $z(t)$, which differs from the phases of the components of $x(t)$. Figure 1.9 depicts the PSD and spectrogram of $z(t)$.

The spectrograms in Figure 1.7 and Figure 1.9 indicate how the magnitudes of the frequency components of $x(t)$ and $z(t)$, respectively, change over time. For instance, Figure 1.9 successfully indicates that around $t = 7$ s an abrupt change occurred and $z(t)$ starts to have a higher frequency (3500 Hz) with a lower amplitude (indicated by the colorbar). In contrast, the spectrum and PSD plots are capable of describing the existence of two frequency components but are unable to indicate their location in time.

The spectrogram generates a representation in both time and frequency domains because it extracts segments from the input signal (e.g., $z(t)$) and calculates the spectrum of each of these segments (also called windows). The spectrogram is stored as a matrix in which each element corresponds to a specific point in the time-frequency grid and stores the magnitude of the spectrum at that point. A sliding window is used to obtain distinct (possibly overlapping) segments. The input parameters window shift and window length define, respectively, the amount of time (or, equivalently, the number of samples of a discrete-time signal) the window is moved at each segment extraction and the total duration of the window.
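The sliding-window procedure can be sketched with the built-in `fft` function as shown below. This is only a simplified illustration under several assumptions: a rectangular window, a window length of 256 samples, a window shift of 128 samples and a signal with only 2 s (instead of 14 s) to keep the example small. It is not the implementation adopted by any specific spectrogram function.

```matlab
Fs = 8000;                         % sampling frequency (Hz)
t = (0:2*Fs-1)/Fs;                 % 2 s of signal (shorter than in the text)
z = [500*cos(2*pi*1000*t(1:Fs)+0.3), 2*cos(2*pi*3500*t(Fs+1:end)+pi)];
L = 256;                           % window length (samples)
S = 128;                           % window shift (samples)
numWin = floor((numel(z)-L)/S) + 1; % number of segments
P = zeros(L/2+1, numWin);          % rows: frequencies, columns: time segments
for m = 1:numWin
    seg = z((m-1)*S + (1:L));      % m-th segment (rectangular window)
    Z = fft(seg);                  % spectrum of this segment
    P(:, m) = abs(Z(1:L/2+1)).';   % magnitudes for frequencies 0 to Fs/2
end
f = (0:L/2)*Fs/L;                  % frequency (Hz) of each row of P
[~, kFirst] = max(P(:, 1));        % strongest frequency in the first segment
[~, kLast] = max(P(:, end));       % strongest frequency in the last segment
disp([f(kFirst) f(kLast)]);        % 1000 and 3500 Hz
```

Each column of `P` is the magnitude spectrum of one segment, such that the strongest frequency changes from 1000 to 3500 Hz along the columns. A command such as `imagesc(20*log10(P+eps))` could then be used to visualize the matrix as an image.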

For similar analyses later in the text, the Matlab/Octave functions ak_spectrum, ak_psd and ak_specgram can be used to estimate the spectrum, PSD and spectrogram of discrete-time signals.⁵

For example, Listing 1.2 was used to generate $z[n]$, from which Figure 1.8 and Figure 1.9 were later obtained.

```matlab
Fs=8000; %sampling frequency (Hz)
Ts=1/Fs; %sampling interval (seconds)
N=Fs * 14; %number of samples assuming a signal duration of 14 secs
n=0:N-1; %generate discrete-time abscissa
t=n*Ts; %discretized continuous-time axis (sec.)
t0=t(1:N/2); %first time segment
t1=t(N/2+1:end); %second time segment
f0=1000; %cosine frequency (Hz)
x0=500*cos(2*pi*f0*t0+0.3); %signal segment for first half
f1=3500; %cosine frequency (Hz)
x1=2*cos(2*pi*f1*t1+pi); %signal segment for second half
z=[x0 x1]; %concatenation of 2 cosines
[Z,f] = ak_spectrum(z, Fs); %spectrum for real-valued signals
subplot(211)
plot(f,20*log10(abs(Z)) + 30); %add 30 to convert dBW into dBm
ylabel('Magnitude (dBm)'), axis([0, Fs/2, -20, 100])
subplot(212)
plot(f,angle(Z));
xlabel('Frequency (Hz)'); ylabel('Phase (rad)')
```

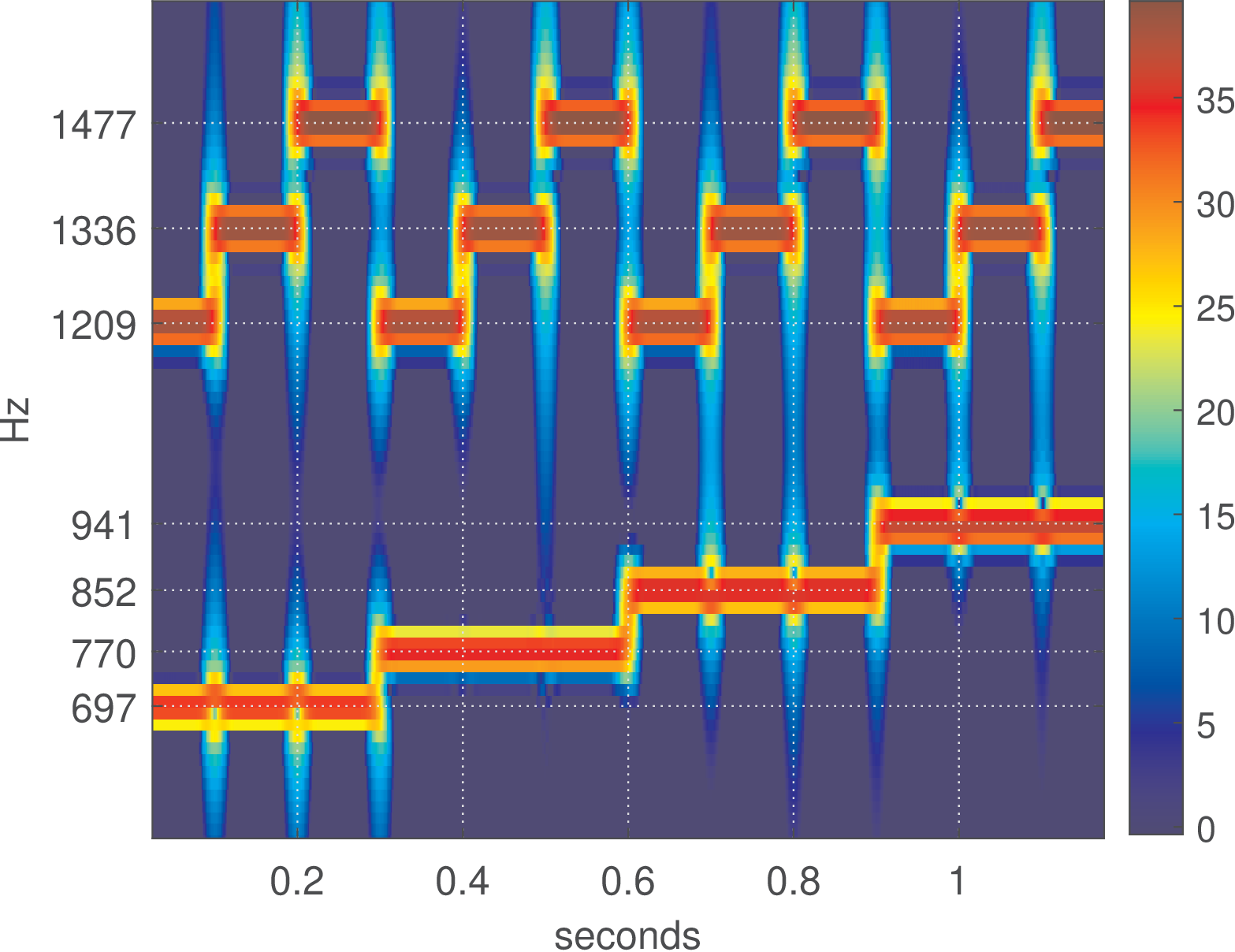

As another example of how spectrograms are useful, Figure 1.10 shows a sequence of all twelve dual-tone multi-frequency (DTMF) tones generated by the script figs_spectral_dtmf.m. In this case, each symbol has a duration of 100 ms. It is possible to visually decode the signal. For example, the first symbol (left-most) is composed of a sum of sines of frequencies 697 and 1209 Hz (representing “1”), while the second is composed of frequencies 697 and 1336 Hz (symbol “2”), and so on.

After creating dtmfSignal with $F_s = 8$ kHz, the spectrogram of Figure 1.10 was generated with the commands below. For a better visualization, the dynamic range was restricted to 40 dB via the parameter thresholdIndB:

```matlab
filterBWInHz=40; %equivalent FFT bandwidth in Hz
samplingFrequency=8000; %sampling frequency in Hz
windowShiftInms=1; %window shift in milliseconds
thresholdIndB=40; %discards low power values below it
ak_specgram(dtmfSignal,filterBWInHz,samplingFrequency,...
    windowShiftInms,thresholdIndB) %calculate spectrogram
```
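The script figs_spectral_dtmf.m is not reproduced here, but a signal similar to dtmfSignal could be created as in the sketch below. It assumes the standard DTMF frequencies, unit-amplitude sines, no silence between symbols and the symbol order 1, 2, 3, 4, 5, 6, 7, 8, 9, *, 0, #, such that the actual script may differ in these details.

```matlab
Fs = 8000;                        % sampling frequency (Hz)
durationInSec = 0.1;              % duration of each symbol (100 ms)
rowFreqs = [697 770 852 941];     % standard DTMF low-group frequencies (Hz)
colFreqs = [1209 1336 1477];      % standard DTMF high-group frequencies (Hz)
t = (0:round(durationInSec*Fs)-1)/Fs; % time axis of one symbol (s)
dtmfSignal = [];                  % signal with all twelve symbols
for r = 1:4                       % keypad rows
    for c = 1:3                   % keypad columns
        tone = sin(2*pi*rowFreqs(r)*t) + sin(2*pi*colFreqs(c)*t);
        dtmfSignal = [dtmfSignal tone]; % concatenate the new symbol
    end
end
disp(numel(dtmfSignal));          % 12 symbols of 800 samples each
```

With this ordering, the first 800 samples correspond to the symbol “1” (697 and 1209 Hz) and the next 800 samples to the symbol “2” (697 and 1336 Hz).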